![Revolutionizing the Future: How Brain Computer Interface Technology is Solving Problems [Infographic]](https://thecrazythinkers.com/wp-content/uploads/2023/04/tamlier_unsplash_Revolutionizing-the-Future-3A-How-Brain-Computer-Interface-Technology-is-Solving-Problems--5BInfographic-5D_1681442810.webp)

Short answer: Brain Computer Interface Technology

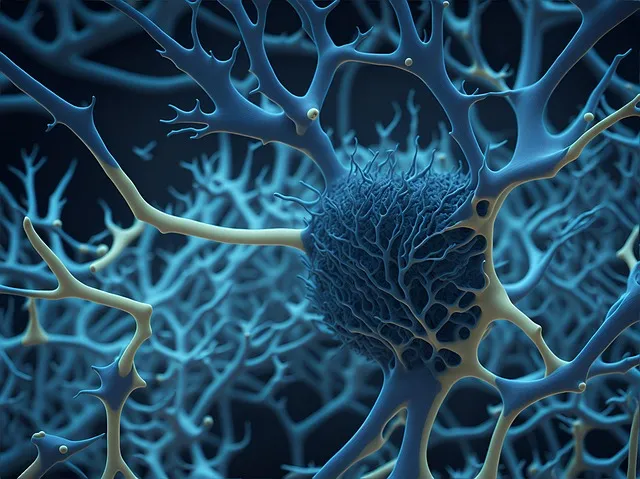

Brain computer interface (BCI) technology is a means of communication between the brain and a computer or other external device. It allows individuals with disabilities to control devices, such as prosthetic limbs, using their thoughts or signals from their nervous system. Researchers are also exploring its use in treating neurological disorders, such as Parkinson’s disease and depression.

The Step by Step Process of Using Brain Computer Interface Technology

The field of Brain Computer Interface (BCI) Technology has brought forth a new way for humans to interact with machines. BCI technology enables machines to read our minds and understand our intentions through the analysis of brainwaves. It is an emerging field that has a wide range of applications including medical, gaming, and communication.

The process involved in using this marvel of technology includes several steps that are both fascinating and complex but can be broken down into three primary stages: signal acquisition, signal processing, and response generation. Let’s dive deeper into each step of this process so you can better understand how it works.

1. Signal Acquisition:

The first step when using a BCI device is to acquire signals from the brain. Sensors placed on or around the scalp read the electrical signals produced by the brain’s neurons. This lets a user communicate with an electronic device without physical actions such as typing, touching a screen, or moving a cursor.

Different applications use different recording techniques, such as electroencephalography (EEG), magnetoencephalography (MEG), electrocorticography (ECoG), and functional magnetic resonance imaging (fMRI). EEG headsets are the most common today because they are relatively low-cost and non-invasive.
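To make the idea of an acquired signal concrete, here is a minimal sketch that simulates what one scalp electrode might deliver: a list of voltage samples taken at a fixed rate. The 250 Hz sample rate, the 10 Hz rhythm, the amplitudes, and the function name `simulate_eeg_channel` are all assumptions chosen for illustration, not values from any particular headset.

```python
import math
import random


def simulate_eeg_channel(duration_s=2.0, sample_rate_hz=250, rhythm_hz=10.0,
                         rhythm_uv=20.0, noise_uv=5.0, seed=0):
    """Return simulated voltages (in microvolts) for one hypothetical electrode.

    The signal is a sine-wave rhythm plus Gaussian noise. Real EEG is far
    messier; this only illustrates the shape of the data a BCI receives.
    """
    rng = random.Random(seed)  # fixed seed so the example is repeatable
    n_samples = int(duration_s * sample_rate_hz)
    samples = []
    for i in range(n_samples):
        t = i / sample_rate_hz  # time of this sample in seconds
        rhythm = rhythm_uv * math.sin(2 * math.pi * rhythm_hz * t)
        noise = rng.gauss(0.0, noise_uv)
        samples.append(rhythm + noise)
    return samples


signal = simulate_eeg_channel()
print(len(signal), "samples acquired")
```

Everything downstream in a BCI works on arrays like this one, usually with many channels recorded in parallel.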

2. Signal Processing:

After acquisition, the signals must be processed before any meaningful data can be extracted from them. This requires digital signal processing algorithms designed for the task at hand. These algorithms take the raw EEG signals received by the sensors and translate them into usable outputs such as images, text, sound, or control commands for digital devices.

A good example is software applications used in gaming that allow users to control game characters’ movements with their thoughts instead of traditional controllers.
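One common processing step is measuring how much signal energy falls inside a frequency band. The sketch below does this with a naive discrete Fourier transform, which gives the same result as an FFT but more slowly. The band edges (8 to 12 Hz and 13 to 30 Hz), the 250 Hz sample rate, and the function name `band_power` are assumptions for illustration.

```python
import cmath
import math


def band_power(samples, sample_rate_hz, low_hz, high_hz):
    """Average power of the DFT bins whose frequency lies in [low_hz, high_hz]."""
    n = len(samples)
    mean = sum(samples) / n
    centered = [s - mean for s in samples]  # remove the DC offset
    total, count = 0.0, 0
    for k in range(1, n // 2):
        freq = k * sample_rate_hz / n  # frequency represented by bin k
        if low_hz <= freq <= high_hz:
            coeff = sum(x * cmath.exp(-2j * math.pi * k * i / n)
                        for i, x in enumerate(centered))
            total += abs(coeff) ** 2 / n ** 2
            count += 1
    return total / count if count else 0.0


# One second of a clean 10 Hz test tone, sampled at an assumed 250 Hz.
fs = 250
window = [math.sin(2 * math.pi * 10 * i / fs) for i in range(fs)]
alpha = band_power(window, fs, 8, 12)   # band containing the tone
beta = band_power(window, fs, 13, 30)   # band that should be nearly empty
print(alpha > beta)
```

A feature like `alpha` is the kind of number a game or other application would then act on, rather than the raw voltages themselves.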

3. Response Generation:

Finally, once the incoming signals have been processed, using techniques such as the Fast Fourier Transform or wavelets together with noise reduction, the resulting output is sent to any digital or mechanical device capable of receiving it. This step can be tricky depending on what kind of response is required. If the goal were to generate an image in the visual cortex, for example, it would involve high-speed displays, which may require additional synchronization logic.
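As a rough illustration of response generation, the sketch below turns a stream of processed feature values into device commands. It uses two thresholds (hysteresis) so that a noisy value hovering near a single cutoff does not make the device flicker on and off. The thresholds, the command names, and the function `make_command_mapper` are hypothetical.

```python
def make_command_mapper(on_threshold, off_threshold):
    """Return a function that maps feature values to 'ACTIVATE' or 'IDLE'.

    The command switches on above on_threshold and only switches off again
    once the feature drops below the lower off_threshold.
    """
    if off_threshold >= on_threshold:
        raise ValueError("off_threshold must be lower than on_threshold")
    state = {"active": False}

    def step(feature):
        if state["active"]:
            if feature < off_threshold:
                state["active"] = False
        elif feature > on_threshold:
            state["active"] = True
        return "ACTIVATE" if state["active"] else "IDLE"

    return step


mapper = make_command_mapper(on_threshold=0.6, off_threshold=0.4)
commands = [mapper(v) for v in [0.2, 0.7, 0.5, 0.45, 0.3, 0.65]]
print(commands)
```

The values 0.5 and 0.45 keep the command active because they never fall below the lower threshold, which is the point of the design.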

Overall, BCI is a significant technological breakthrough that has opened up new avenues in fields like healthcare, gaming, communication and beyond. Applying it to real-world situations could potentially help improve our quality of life by enabling machines to understand what we want before asking for it verbally – making our lives much easier and more efficient.

To conclude: You never know! Maybe one day we’ll leave behind traditional controllers, keyboards and touch inputs and use nothing but our thoughts to communicate with machines around us. Sounds pretty sci-fi; doesn’t it? But who knows… maybe this future isn’t so far away after all!

Frequently Asked Questions About Brain Computer Interface Technology

Brain computer interface technology – also known as BCI or neurotechnology – is a rapidly growing field that combines neuroscience and engineering to create communication pathways between the human brain and external devices. This innovative technology has the potential to revolutionize the way we interact with computers, machines, and even other people. However, as with any new development, there are bound to be questions about how it works, its applications, risks and advantages. Here are some frequently asked questions about Brain Computer Interface Technology:

1. What is BCI technology?

BCI technology refers to a range of technologies that allow individuals to communicate with their environment using only their thoughts, without conventional tools such as a mouse and keyboard or a touchscreen.

2. How does BCI work?

BCI systems typically monitor specific brain activity that produces measurable electrical signals, such as those recorded by EEG (electroencephalography), and extract information from those signals. The signals are captured either by directly implanted neural micro-electrodes or by non-invasive techniques such as EEG caps.

3. What applications do bi-directional BCIs have?

A bi-directional BCI allows two-way communication between the computer system and the brain, which enables precise control in real-time applications such as: rehabilitation after stroke or spinal cord injury; gaming, where players can influence gameplay using mental states for a more immersive experience; assistive technologies that let people with physical disabilities control simple movements of robotic devices; and restoring vision, or sensation for amputees.

4. Are there any side effects associated with this type of technology?

Implantable brain chip technologies may carry side effects because of the surgical procedures involved in placing the equipment in the patient’s body. Non-invasive techniques such as EEG caps have no identified risk factors so far, since nothing is introduced into the body.

5. Who can benefit from this type of technology?

The BCI could initially target patients suffering from neurological injuries or conditions. However, with increasing commercial gains, many people in various industries could benefit from BCI technology such as gamers, designers and engineers.

6. Is this type of technology expensive?

The cost varies depending on the type of BCI system you choose. Non-invasive systems like EEG caps are currently more affordable and practical for most applications, while devices like neural implants can be expensive, with possible additional costs for regular maintenance and monitoring.

7. How do privacy concerns arise with BCI technology?

One example is protecting sensitive information when working remotely over a network, where exposing certain brain states may reveal that information to someone with the means to decode it.

Conclusion:

As neuroscience continues to advance, so too will BCI technology. It opens up countless possibilities for furthering human-machine interaction and making communication faster and more intuitive. There are possible setbacks associated with these breakthroughs, such as privacy concerns or side effects from the surgical procedures involved in placing invasive equipment within the body. Even so, adoption has been growing steadily because of the potential benefits. Medical practitioners rate the technology as highly useful for treating patients with neurological disorders. Psychologists are using it to explore how our minds work, gaining insights into sleep hygiene and into how emotional responses correlate with physiological events such as blood pressure. Users gain access to new forms of entertainment, including richer and more immersive gaming experiences, and designers can build more ergonomic interfaces that account for subtle psychomotor responses beyond what common input devices capture. Ultimately, BCI technology could move past the limitations of traditional interfaces such as mice, keyboards, and smartphones, with impact not only in healthcare but in many other domains.

Advantages and Disadvantages of Brain Computer Interface Technology

Brain Computer Interface (BCI) technology is a revolutionary field that seeks to establish direct communication between the human brain and computer systems. It integrates advanced neuroscience, engineering, and machine learning techniques with the aim of enabling people to control computers using their thoughts. While this sounds like a scene straight from a science fiction movie, BCI technology has already made great strides in the medical industry, with potential applications in the military and gaming industries. However, like most cutting-edge technologies, Brain-Computer Interfaces come with their own set of advantages and disadvantages.

Advantages

1. Better Accessibility: BCI Technology allows individuals with physical disabilities such as paralysis or motor neuron disorders to operate machines without the use of limbs thereby giving them more independence.

2. Scalability: BCI technology can be applied across several fields, including robotics, prosthetics, and virtual/augmented reality.

3. Enhanced Performance: Where BCIs can achieve faster response times than traditional input devices, they could be used to improve performance in specific fields such as drone navigation or high-stress environments.

4. Improved Medical Research: One important benefit of BCI is its application in medical research, especially for understanding how the brain works through neurofeedback, which could also aid in studying mental illnesses.

Disadvantages

1. High Cost: Despite significant progress by manufacturers toward cost reduction, implementing BCIs remains expensive. Equipment ranges from $5k to $20k per device, making it unaffordable for everyday consumers.

2. Limited Adoption Rates: Although advances have made these devices more available to regular users, many non-specialists remain unaware of BCIs because of a lack of marketing, especially compared with popular gadgets such as smartphones and other mobile computing devices.

3. Intricacy and Limitations of EEG-Based Systems: Significant technical expertise may be needed to operate some brain-computer interfaces, particularly those based on electroencephalography (EEG). Limitations in signal quality and noise add complexity, making these devices less appealing to the average user.

4. Ethical Considerations: While BCIs have their benefits, many ethical debates surround their use, for instance the implications of someone hacking an individual’s personal thoughts.

In conclusion, Brain Computer Interface technology will continue to play a significant role in enhancing human cognition and machine functionality. Given its potential to improve people’s lives across several industries, research and development must find ways to overcome the challenges of adoption, including cost reduction and effective education about these devices. As with every new technology, developers need to ensure they are tackling real-world problems without breaching users’ privacy rights or exposing them to security risks. There is always room for improvement over time, but BCI holds promising, even lifesaving, opportunities that can redefine how we live if it is used ethically and its disadvantages are carefully managed.

Top 5 Facts You Need to Know About Brain Computer Interface Technology

As technology advances with every passing day, a new form of innovation has surfaced – Brain Computer Interface (BCI) technology that promises to take humanity’s evolution to the next level. This futuristic system works like magic and unlocks possibilities that we never even imagined before. Here are the top 5 facts that you need to know about BCI technology.

1. The Basics of Brain-Computer Interface (BCI)

The brain is essentially an electrical organ, and it emits brainwaves constantly. BCI devices use electrodes placed on the scalp or implanted directly in the brain to record these waves and then decode them into instructions for computers or machines. It allows users to interact with their environment by simply thinking about it.
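The decoding step can be pictured as a small classifier that turns measured features into an instruction. The sketch below uses a nearest-centroid rule, which is only one simple choice among many. The two-number feature vectors (imagine signal strength at two electrodes), the labels `LEFT` and `RIGHT`, and the function names are made up for illustration.

```python
import math


def fit_centroids(examples):
    """Average the training vectors for each label.

    examples maps a label to a list of equal-length feature vectors.
    """
    centroids = {}
    for label, vectors in examples.items():
        dims = len(vectors[0])
        centroids[label] = [sum(v[d] for v in vectors) / len(vectors)
                            for d in range(dims)]
    return centroids


def decode(centroids, features):
    """Return the label whose centroid is closest to the new feature vector."""
    return min(centroids,
               key=lambda label: math.dist(centroids[label], features))


# Hypothetical calibration data recorded while the user imagined each action.
training = {
    "LEFT": [[0.9, 0.2], [0.8, 0.3], [1.0, 0.1]],
    "RIGHT": [[0.2, 0.9], [0.3, 0.8], [0.1, 1.0]],
}
centroids = fit_centroids(training)
print(decode(centroids, [0.85, 0.25]))
```

In practice a calibration session like `training` is usually needed for each user, because brain signals differ from person to person.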

2. The Exponential Growth of Brain-Computer Interface Technology

In recent years, advancements in neuroscience, artificial intelligence, and engineering have accelerated the growth of BCI technology exponentially. From controlling basic movements like typing and scrolling screens to operating complex machinery such as cars or planes – today’s BCIs offer exciting potential applications in various industries.

3. Applications for Health Care

One promising application for BCI technology is healthcare. Researchers are developing implants that can stimulate damaged areas such as the spinal cord, as well as BCI-controlled prostheses attached directly to patients’ limbs, intended for people who have lost motor function through accidents, paralysis, or diseases such as Parkinson’s.

4. Potential Security Risks

Like most advanced technological innovations, BCI tech comes with potential security risks: hackers could access sensitive information in the cognitive data streams that users generate by using such devices daily. For example, credit card details entered via thought-controlled input modules could be captured and used against unsuspecting victims.

5. A New Era of Communication

BCI tech has not only opened up new frontiers in computer science but also offers plausible solutions to the communication obstacles faced by people with mobility impairments caused by conditions like cerebral palsy. It may also increase the speed of communication between humans working on complicated tasks in cyberspace.

In conclusion, Brain-Computer Interface technology has come a long way since its inception, and its future growth potential is limitless. BCIs have shown promise in aiding individuals living with physical disabilities, giving them greater control over their environment, and in supporting complex tasks, such as cyber security, that require high-speed transmission and processing of information. As BCI tech continues to flourish and expand our cognitive abilities, we can only wait and see what new magic it unveils in the years to come.

Real Life Applications of Brain Computer Interface Technology

Brain Computer Interface (BCI) technology has revolutionized the way we interact with machines in recent years. From controlling robotic devices to controlling video games, this technology has provided numerous benefits to people with disabilities and limitations. However, its applications go beyond the world of gaming and disability assistance. In fact, BCI technology is becoming increasingly useful in a variety of real-life scenarios.

One area where BCI technology is used extensively is prosthetics. People who have lost limbs can use brain signals to control a prosthetic device connected to their brains. By using electrodes implanted in the brain or attached to the scalp, patients can control various movements such as hand grasping, wrist flexion-extension, and elbow movement. This allows them to carry out daily activities more naturally and easily than ever before.

BCI technology has also been used for communication purposes. Patients who have lost their ability to speak or who suffer from paralysis can use BCI systems to communicate through text-to-speech software with ease. The system involves reading data patterns generated by patients’ thoughts which are then translated into speech via machine learning algorithms.

Beyond healthcare applications, BCI technology has also found uses in everyday life. For example, researchers at MIT have developed a system that uses brain waves generated while watching TV shows or movies as an indication of how engaging the content was for viewers. The information collected could potentially be used by entertainment companies to enhance content and make it more appealing to audiences.

Another potential application lies in transportation, such as automobiles, where a BCI could monitor a driver’s fatigue level and alert them if they become too drowsy, helping to avoid accidents caused by fatigue-related lapses in attention.
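A fatigue monitor of this kind could be sketched as follows. The code assumes that band powers for the theta, alpha, and beta ranges have already been computed for each time window, and it uses the ratio (theta + alpha) / beta as a drowsiness index. That ratio, the threshold of 1.5, the three-window average, and the function name are all assumptions for illustration, not a validated detection method.

```python
from collections import deque


def make_fatigue_monitor(threshold=1.5, window=3):
    """Return an update function that reports True when an alert should fire.

    The alert fires only when the average drowsiness index over the last
    `window` readings exceeds `threshold`, so one noisy reading is ignored.
    """
    recent = deque(maxlen=window)  # keeps only the newest readings

    def update(theta, alpha, beta):
        if beta <= 0:
            raise ValueError("beta power must be positive")
        recent.append((theta + alpha) / beta)
        average = sum(recent) / len(recent)
        return len(recent) == window and average > threshold

    return update


monitor = make_fatigue_monitor()
# Hypothetical (theta, alpha, beta) band powers: three alert windows, then two drowsy ones.
readings = [(1.0, 1.0, 2.0)] * 3 + [(2.0, 2.0, 2.0)] * 2
alerts = [monitor(*r) for r in readings]
print(alerts)
```

Averaging over several windows trades a short delay for fewer false alarms, which matters when the alert is meant to interrupt a driver.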

BCI technology even shows promise as an interface between humans and animals. One real-life application involved training cows via visual stimuli paired with electrical impulses that rewarded the proper action (for instance, moving towards food when given a cue). This technology holds tremendous promise in animal husbandry for training and directing animals that are essential to the food supply chain.

These are just a few of the numerous applications of BCI technology available today. Given recent advancements and improvements, it is set to become an increasingly integral part of our daily routines as we interact with technology beyond simple buttons and keys. With such promising potential and innovative use cases already in place, one can only imagine what further surprises await!

The Future of Brain Computer Interface Technology and Its Implications

Over the past decade, we’ve seen a dramatic increase in the research and development of brain-computer interface (BCI) technology. This cutting-edge field has the potential to revolutionize how we interact with computers, machines, and even each other.

At its core, BCI technology refers to any system that allows for direct communication between the human brain and an external device. This can take many different forms depending on the specific application – from incredibly precise surgical instruments controlled by thought alone to simple video games played purely with brainwaves.

There are two primary approaches when it comes to BCI: invasive and non-invasive. Invasive methods involve implanting electrodes directly into the brain tissue itself; while more accurate, these techniques also come with a greater risk of complications such as infections or bleeding. Non-invasive options, on the other hand, use external sensors (usually located on a cap or helmet worn by the user) to capture electrical signals generated by the brain.

One of the most exciting areas where BCI technology is being developed is in medicine. For instance, BCIs could be used to help restore mobility to individuals who have suffered paralysis due to spinal cord injuries or other disorders affecting movement. By decoding signals sent out by their brains along existing nerve pathways, patients could learn to manipulate robotic limbs or even control their own muscles again through targeted stimulation.

Another promising avenue for BCI lies in improving our ability to understand and diagnose mental illness. By collecting data about neural activity directly from patients’ brains (as opposed to simply verbal or behavioral responses), doctors may be able to quickly identify conditions such as depression or anxiety that might otherwise go untreated or misdiagnosed.

Of course, like any new technology there are both benefits and potential downsides associated with BCI development. On one hand, increased integration between human beings and machines could open up new possibilities for productivity, creativity and connection – think of a world where you could send emails or write articles simply by thinking the words…

On the other hand, however, such deep integration could also lead to a host of ethical and philosophical questions around what it means to be human. When we can control machines with our thoughts and perceive digital information as though it were part of our own internal experience, where do we draw the line between biological and artificial consciousness? What kinds of unintended consequences might emerge if we begin to blur these boundaries too much?

Despite these uncertainties, I believe that BCI technology holds tremendous promise for the future. By directly accessing the most complex and powerful organ in our bodies, we may one day unlock entirely new levels of communication, creativity and understanding – all while bringing us closer together as a species than ever before. It’s an exciting time to be alive!

Table with Useful Data:

| Technology | Description | Advantages | Disadvantages |
|---|---|---|---|
| Invasive BCI | Implanted inside the brain and directly connected to neural tissue | Provides high accuracy, resolution, and control | Potential for infection or rejection of implant, invasive surgery required |
| Non-Invasive BCI | Does not require surgery, uses electrodes outside the scalp to detect neural activity | Less invasive, easier to implement, lower risk of complications | Lower accuracy and resolution, prone to interference from environmental noise |
| Hybrid BCI | Uses a combination of invasive and non-invasive techniques to increase accuracy and resolution | Provides a balance between the advantages of both invasive and non-invasive techniques | Requires expertise in multiple technologies, higher cost and complexity |
| Applications | Medical, communication, gaming, education, military, among others | Allows for better quality of life and independence for people with disabilities, expands human capabilities | Costly, limited availability, potential for misuse or abuse |

Information from an expert

As an expert on brain-computer interface technology, I can attest to its immense potential for improving human lives. This cutting-edge technology allows for direct communication between the human brain and electronic devices, enabling individuals with disabilities to control prosthetics or communicate through thought alone. With ongoing research and developments, we are on the brink of a new era where mind-controlled machines will become more common and accessible. Although there are still challenges to overcome, I am optimistic about the future prospects of this technology in revolutionizing healthcare, entertainment, and everyday life.

Historical fact: The term “brain-computer interface” was coined in 1973 by Jacques Vidal, who proposed using electrical signals recorded from the brain to control a computer. His work built on Hans Berger’s first recordings of human brain activity with scalp electrodes (EEG) in 1924.
